Learning in Public

Introduction & Series Overview

Series: My Journey to Building AI Agents

Audience: Senior Full Stack Developers · Solution Architects · Tech Leads

Come along as I figure this out 😅

Gartner predicts 40% of enterprise applications will embed AI agents by the end of 2026.

I've been watching this space accelerate, and honestly? The learning curve feels overwhelming. There's so much to absorb—frameworks, patterns, terminology—and I decided the best way to learn is to do it publicly.

This series is my personal journey to understand AI agents from the ground up. I'm not an expert. I'm a developer who wants to build these systems and is willing to share my stumbles, discoveries, and "aha" moments along the way. Over the next several weeks, I'll work through official documentation from Anthropic, Google Cloud, and other sources, documenting what I learn as I go.

If you're also trying to wrap your head around AI agents, let's figure this out together. Bookmark this overview, follow along, and feel free to share your own insights in the comments.

The Core Philosophy I'm Adopting

Before diving in, I found a principle from Anthropic that resonated with me:

"Start with simple prompts, optimize them with comprehensive evaluation, and add multi-step agentic systems only when simpler solutions fall short." — Anthropic, Building Effective Agents

This is going to be my guiding principle. I'll resist the temptation to jump straight into complex multi-agent systems before I actually understand the fundamentals. Simple first.

The Learning Roadmap

Here's the path I've mapped out for myself—12 weeks across three phases:

| Phase | Focus | Duration | What I'll Explore |
|---|---|---|---|
| Phase 1 | Foundations | Weeks 1-3 | LLMs, Prompt Engineering, APIs |
| Phase 2 | Core Capabilities | Weeks 4-6 | Tools, Context, RAG |
| Phase 3 | Agent Architecture | Weeks 7-12 | Patterns, Workflows, Memory, Evaluation, Multi-Agent, Production |

Phase 1: Foundations

Starting with the basics. I need to build solid mental models before jumping into agent-specific topics.

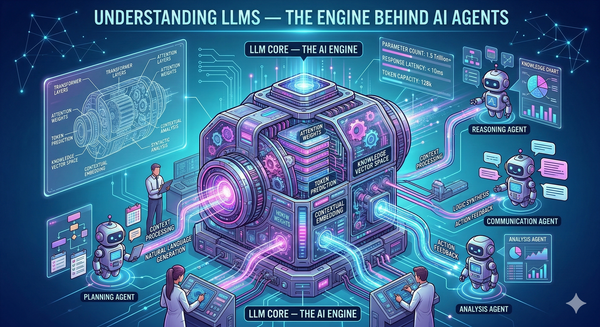

1. Understanding LLMs

I want to really grasp how transformers process tokens, why context windows matter, and what determines model capabilities. That includes the difference between completion and chat models, how temperature affects output, and why hallucinations happen. This feels essential for debugging agent behavior later.
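To make "temperature effects" concrete for myself, here's a minimal sketch of the standard softmax-with-temperature calculation. The logits are made-up scores for three hypothetical candidate tokens. Hosted APIs do this inside the model server; as a caller, I only set a `temperature` parameter.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw next-token scores (logits) into sampling probabilities.

    Dividing by the temperature before the softmax sharpens the distribution
    when temperature < 1 and flattens it when temperature > 1.
    """
    if temperature <= 0:
        # Many APIs accept 0 (close to always picking the top token);
        # this sketch rejects it to avoid dividing by zero.
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - top) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for three candidate tokens.
logits = [2.0, 1.0, 0.1]
for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(f"temperature={t}: {[round(p, 3) for p in probs]}")
```

Lower temperatures pile probability onto the top token, which is why they tend to give more repeatable (though not guaranteed identical) output.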

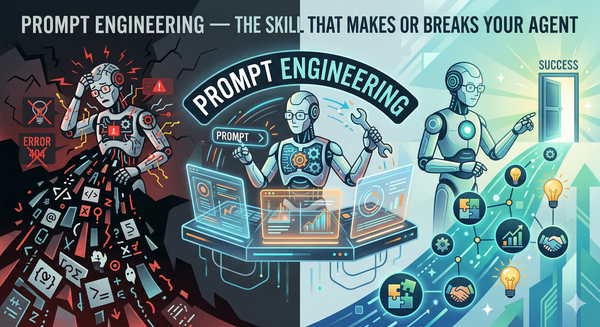

2. Prompt Engineering

I've read that Anthropic's team, while building its SWE-bench agent, spent more time optimizing tools than the overall prompt. Prompts are still the foundation, though, so I want to explore zero-shot, few-shot, and chain-of-thought patterns in depth. Structured output techniques and system prompt design seem crucial for making agents predictable.
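Here's how I picture few-shot prompting in code: a minimal sketch, assuming a generic chat-style list of `role`/`content` dicts. The helper `build_few_shot_messages` and the ticket examples are hypothetical. Provider formats differ; Anthropic's Messages API, for example, takes the system prompt as a separate `system` parameter rather than a message.

```python
def build_few_shot_messages(system, examples, query):
    """Assemble a chat-style message list for few-shot prompting.

    Each (input, output) example becomes a user/assistant pair, so the model
    sees worked demonstrations before the real query. Passing an empty
    `examples` list gives a zero-shot prompt.
    """
    messages = [{"role": "system", "content": system}]
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    messages.append({"role": "user", "content": query})
    return messages


# Hypothetical examples that also demonstrate a structured (JSON) output format.
examples = [
    ("Ticket: 'App crashes on login'", '{"category": "bug"}'),
    ("Ticket: 'Please add dark mode'", '{"category": "feature"}'),
]
messages = build_few_shot_messages(
    "Classify support tickets. Reply with JSON only.",
    examples,
    "Ticket: 'Export to CSV is slow'",
)
for m in messages:
    print(m["role"], "->", m["content"])
```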

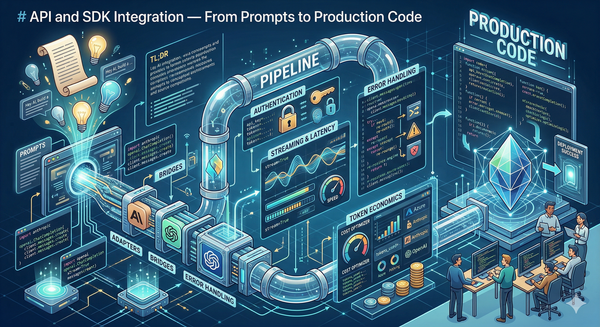

3. API and SDK Integration

Time to get my hands dirty connecting to Claude, OpenAI, and Azure endpoints. I'll work through authentication, rate limiting, retries, and streaming. Token economics and cost optimization are things I know I'll care about at scale.
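Retries are the first thing I expect to hit. Below is a minimal sketch of exponential backoff with "full jitter", assuming a hypothetical `RateLimitError` standing in for whatever rate-limit (HTTP 429) exception a given SDK raises. The official Anthropic and OpenAI Python SDKs already retry some errors on their own (configurable via `max_retries`), so this is mostly for understanding what happens underneath.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for an SDK's HTTP 429 (rate limited) error."""


def call_with_retries(fn, max_attempts=5, base_delay=0.5, max_delay=8.0,
                      sleep=time.sleep):
    """Call fn(), retrying on RateLimitError with exponential backoff and jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts:
                raise  # out of attempts: let the caller handle it
            # Exponential backoff: 0.5s, 1s, 2s, 4s, ... capped at max_delay.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            # "Full jitter": wait a random time up to the delay so many
            # clients don't all retry at the same moment.
            sleep(random.uniform(0, delay))


# Usage with a fake endpoint that is rate limited twice, then succeeds.
calls = {"n": 0}


def flaky_endpoint():
    calls["n"] += 1
    if calls["n"] < 3:
        raise RateLimitError("429 Too Many Requests")
    return "ok"


print(call_with_retries(flaky_endpoint, sleep=lambda s: None))
```

Injecting `sleep` keeps the helper testable without actually waiting.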

Phase 2: Core Capabilities

This is where I'll start equipping LLMs with abilities that transform them into actual agents.

4. Tool Use and Function Calling

From what I've read, tools let Claude interact with external services, and each tool's exact structure (its name, description, and input schema) is specified up front. I want to understand Anthropic's guidance on designing tool interfaces: keeping formats close to what the model has naturally seen in text, minimizing formatting overhead, and writing good tool documentation.
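To see what "specifying their exact structure" means, here's a minimal sketch of one tool definition plus a local dispatcher. The dict shape (`name`, `description`, `input_schema` as JSON Schema) follows Anthropic's Messages API tool definitions; the `get_weather` tool, its stubbed data, and the `run_tool` dispatcher are hypothetical.

```python
import json

# Tool definition: a name, a plain-language description that tells the model
# when to use it, and a JSON Schema describing the expected input.
GET_WEATHER_TOOL = {
    "name": "get_weather",  # hypothetical tool for illustration
    "description": (
        "Get the current weather for a city. Use this for present conditions, "
        "not forecasts. Returns the temperature in Celsius."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "city": {"type": "string", "description": "City name, e.g. 'Paris'"},
        },
        "required": ["city"],
    },
}


def get_weather(city):
    # Stubbed data; a real tool would call an external weather service.
    return {"city": city, "temp_c": 21}


TOOL_HANDLERS = {"get_weather": get_weather}


def run_tool(name, tool_input):
    """Dispatch a tool call requested by the model to local Python code."""
    handler = TOOL_HANDLERS.get(name)
    if handler is None:
        # Return the error as data so the model can see what went wrong.
        return json.dumps({"error": f"unknown tool: {name}"})
    return json.dumps(handler(**tool_input))


print(run_tool("get_weather", {"city": "Paris"}))
```

Most of the design effort seems to go into the `description` text, since that's what the model reads when deciding whether and how to call the tool.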

5. Context Engineering

From what I've read, the Claude Agent SDK treats context management as fundamental. I'll explore hierarchical information organization, agentic search patterns, and automatic compaction. Context seems to be the bridge between knowledge and action.
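My mental model of compaction, as a minimal sketch: once the conversation exceeds a token budget, fold the older messages into a single summary and keep the recent ones verbatim. I don't know how the Claude Agent SDK implements this internally. The 4-characters-per-token estimate, `placeholder_summary`, and `compact` are all hypothetical.

```python
def estimate_tokens(text):
    # Rough heuristic of ~4 characters per token; real tokenizers differ.
    return max(1, len(text) // 4)


def placeholder_summary(messages):
    # Hypothetical: in practice you'd ask the model to summarize these messages.
    return f"[Summary of {len(messages)} earlier messages]"


def compact(messages, budget, keep_recent=4, summarize=placeholder_summary):
    """If the history exceeds `budget` tokens, fold older messages into one summary."""
    total = sum(estimate_tokens(m["content"]) for m in messages)
    if total <= budget or len(messages) <= keep_recent:
        return messages  # fits, or nothing old enough to compact
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    summary = {"role": "user", "content": summarize(older)}
    return [summary] + recent


history = [{"role": "user" if i % 2 == 0 else "assistant", "content": "x" * 400}
           for i in range(10)]
compacted = compact(history, budget=500)
print(len(history), "->", len(compacted), compacted[0]["content"])
```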

6. RAG System Design

This topic is about building retrieval pipelines that ground agent responses in real data. I need to understand chunking strategies, embedding models, vector database options, and hybrid search. When does RAG solve the problem, and when do agents need something else?
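Chunking is the first RAG step I want to understand. Here's a minimal sketch of fixed-size chunking with overlap, one strategy among several (others split on headings, sentences, or semantic boundaries). It counts words as a rough proxy for tokens, and the sizes are hypothetical.

```python
def chunk_words(text, chunk_size=200, overlap=40):
    """Split text into word-based chunks; consecutive chunks share `overlap` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reached the end of the text
    return chunks


text = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(text, chunk_size=4, overlap=1):
    print(chunk)
```

The overlap means a sentence that straddles a boundary still appears whole in at least one chunk, at the cost of storing some words twice.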

Phase 3: Agent Architecture

This is where it gets exciting—designing systems where LLMs actually direct their own processes.

7. Agentic Design Patterns

Google Cloud identifies sequential, loop, and parallel as foundational patterns. I want to master ReAct (Reason-Act-Observe) for dynamic tasks and Plan-and-Execute for efficient multi-step reasoning. Choosing the right pattern for the right task seems critical.
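To get a feel for ReAct, here's a minimal sketch of the loop: the model writes a thought and an action, the code runs the tool, and the observation is appended before the next step. The `Action: tool[arg]` text protocol, the `multiply` tool, and `scripted_model` (a stand-in for a real LLM call) are all hypothetical; real agents usually rely on native tool calling instead of parsing text.

```python
import math
import re


def react_loop(model, tools, question, max_steps=5):
    """Interleave reasoning, acting, and observing until a final answer appears."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = model(transcript)  # "Thought: ...\nAction: tool[arg]" or "...Final Answer: ..."
        transcript += step + "\n"
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = re.search(r"Action: (\w+)\[(.*?)\]", step)
        if match is None:
            transcript += "Observation: could not parse an action\n"
            continue
        name, arg = match.groups()
        tool = tools.get(name)
        observation = tool(arg) if tool else f"unknown tool {name}"
        transcript += f"Observation: {observation}\n"
    return None  # no answer within max_steps


def scripted_model(transcript):
    # Acts once, then answers using the latest observation.
    if "Observation:" not in transcript:
        return "Thought: I should compute this with a tool.\nAction: multiply[17,23]"
    last_obs = transcript.rsplit("Observation:", 1)[1].strip()
    return f"Thought: The tool gave me the result.\nFinal Answer: {last_obs}"


tools = {"multiply": lambda arg: str(math.prod(int(x) for x in arg.split(",")))}
print(react_loop(scripted_model, tools, "What is 17 * 23?"))
```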

8. Workflow Orchestration

Anthropic distinguishes "workflows," where LLMs and tools are orchestrated through predefined code paths, from "agents," where LLMs dynamically direct their own processes and tool usage. I'll dig into prompt chaining, routing, parallelization, and human-in-the-loop checkpoints.
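The workflow side of that distinction is easiest for me to see in code. Below is a minimal sketch of prompt chaining, where my code (not the model) fixes the sequence of steps, with a programmatic "gate" check between them as Anthropic's write-up describes. The prompts, the three-point check, and `fake_llm` are hypothetical.

```python
def prompt_chain(llm, topic):
    """Workflow: a predefined code path of outline -> gate -> draft."""
    outline = llm(f"Write a 3-point outline about {topic}.")
    # Gate: a programmatic check between steps; stop if the outline is malformed.
    points = [line for line in outline.splitlines() if line.strip()]
    if len(points) < 3:
        raise ValueError(f"gate failed: expected 3 points, got {len(points)}")
    return llm(f"Expand this outline into a short paragraph:\n{outline}")


def fake_llm(prompt):
    # Hypothetical stand-in for a real model call, so the sketch runs offline.
    if prompt.startswith("Write a 3-point outline"):
        return "1. What agents are\n2. When to use them\n3. When not to"
    return "DRAFT based on: " + " / ".join(prompt.splitlines()[1:])


print(prompt_chain(fake_llm, "AI agents"))
```

An agent, by contrast, would decide for itself whether to outline first, how many steps to take, and when to stop.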

9. Memory Systems

Agents apparently need short-term, medium-term, and long-term memory for autonomous operations. I want to implement conversation buffers, session persistence, and retrieval-augmented memory. How do you build systems that learn without catastrophic forgetting?
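Short-term memory plus session persistence is where I plan to start. Here's a minimal sketch: a bounded conversation buffer that drops the oldest messages and can be serialized to JSON so a session survives a restart. The `ConversationBuffer` class and its method names are hypothetical, and long-term or retrieval-augmented memory would sit on top of something like this.

```python
import json
from collections import deque


class ConversationBuffer:
    """Short-term memory: keep only the most recent `max_turns` messages."""

    def __init__(self, max_turns=6):
        # A bounded deque drops the oldest message automatically when full.
        self._messages = deque(maxlen=max_turns)

    def add(self, role, content):
        self._messages.append({"role": role, "content": content})

    def as_messages(self):
        return list(self._messages)

    def to_json(self):
        # Session persistence: serialize so the buffer can survive a restart.
        return json.dumps({"max_turns": self._messages.maxlen,
                           "messages": list(self._messages)})

    @classmethod
    def from_json(cls, data):
        state = json.loads(data)
        buffer = cls(max_turns=state["max_turns"])
        for message in state["messages"]:
            buffer.add(message["role"], message["content"])
        return buffer


buffer = ConversationBuffer(max_turns=4)
for i in range(6):
    buffer.add("user" if i % 2 == 0 else "assistant", f"message {i}")
restored = ConversationBuffer.from_json(buffer.to_json())
print([m["content"] for m in restored.as_messages()])
```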

10. Evaluation Frameworks

Anthropic's write-up on the Claude Agent SDK describes a verification step built on rule-based feedback, visual inspection, and LLM-as-judge strategies. I need to figure out how to build evaluation harnesses that catch failures before production.
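Rule-based feedback is the cheapest of the three strategies, so it's where I'd start. Here's a minimal sketch of a check harness that runs named rules over an output and returns per-rule feedback that could be handed back to the model. The two rules (`check_is_json`, `check_max_length`) and the `evaluate` helper are hypothetical examples.

```python
import json


def check_is_json(output):
    """Rule: the output must parse as JSON."""
    try:
        json.loads(output)
        return True, "valid JSON"
    except json.JSONDecodeError as exc:
        return False, f"invalid JSON: {exc}"


def check_max_length(limit):
    """Rule factory: the output must be at most `limit` characters."""
    def check(output):
        return len(output) <= limit, f"length {len(output)} (limit {limit})"
    return check


def evaluate(output, checks):
    """Run every named rule; return (all_passed, per-rule feedback)."""
    feedback = [(name, *check(output)) for name, check in checks]
    return all(ok for _, ok, _ in feedback), feedback


checks = [("is_json", check_is_json), ("max_length", check_max_length(200))]
passed, feedback = evaluate('{"category": "bug"}', checks)
print(passed)
for name, ok, detail in evaluate("Sure! Here's the JSON...", checks)[1]:
    print(name, ok, detail)
```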

11. Multi-Agent Systems

Google's patterns include coordinator, hierarchical task decomposition, and swarm architectures. Starting with a supervisor (coordinator-style) pattern seems like a good entry point: central orchestration with specialized workers.
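Here's the supervisor shape as I currently understand it, as a minimal sketch: a central function routes each subtask to a specialist and collects the results. Both workers are stubs, and `keyword_router` is a hypothetical keyword rule standing in for the LLM call that would normally make the routing decision.

```python
def research_worker(task):
    # Stub specialist; a real worker would be its own prompt, tools, and model call.
    return f"research notes on: {task}"


def coding_worker(task):
    return f"code draft for: {task}"


WORKERS = {"research": research_worker, "code": coding_worker}


def keyword_router(task):
    # Hypothetical stand-in for an LLM choosing which worker fits the task.
    return "code" if "implement" in task.lower() else "research"


def supervisor(tasks, route=keyword_router):
    """Central orchestrator: route each subtask to a specialist, then collect results."""
    results = []
    for task in tasks:
        worker_name = route(task)
        results.append((worker_name, WORKERS[worker_name](task)))
    return results


for worker, output in supervisor(["Implement the CSV exporter", "Compare vector databases"]):
    print(worker, "->", output)
```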

12. Production Deployment

The gap between demos and production is real. I'll explore sandboxing, observability, graceful degradation, and cost controls. What do companies actually do when running agents at scale?
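Cost controls and graceful degradation seem to go together, so here's a minimal sketch combining them: a per-task budget guard that charges the estimated cost before each model call and returns a fallback answer instead of crashing once the budget would be exceeded. `CostGuard`, `guarded_call`, and the prices and limit are hypothetical placeholders, not real model pricing.

```python
class BudgetExceeded(Exception):
    pass


class CostGuard:
    """Track estimated spend for one task and refuse calls past a hard limit."""

    def __init__(self, limit_usd, in_price_per_mtok, out_price_per_mtok):
        self.limit_usd = limit_usd
        self.in_price = in_price_per_mtok    # USD per million input tokens
        self.out_price = out_price_per_mtok  # USD per million output tokens
        self.spent_usd = 0.0

    def charge(self, input_tokens, output_tokens):
        cost = (input_tokens * self.in_price + output_tokens * self.out_price) / 1_000_000
        if self.spent_usd + cost > self.limit_usd:
            raise BudgetExceeded(
                f"${self.spent_usd + cost:.4f} would exceed ${self.limit_usd:.2f}")
        self.spent_usd += cost
        return cost


def guarded_call(guard, call, input_tokens, output_tokens, fallback):
    """Charge the estimated cost first; degrade to a fallback instead of crashing."""
    try:
        guard.charge(input_tokens, output_tokens)
    except BudgetExceeded:
        return fallback
    return call()


# Placeholder prices and limit for illustration only.
guard = CostGuard(limit_usd=0.01, in_price_per_mtok=3.0, out_price_per_mtok=15.0)
first = guarded_call(guard, lambda: "full answer", 1_000, 200, "Sorry, try again later.")
second = guarded_call(guard, lambda: "full answer", 1_000, 200, "Sorry, try again later.")
print(first, "|", second)
```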

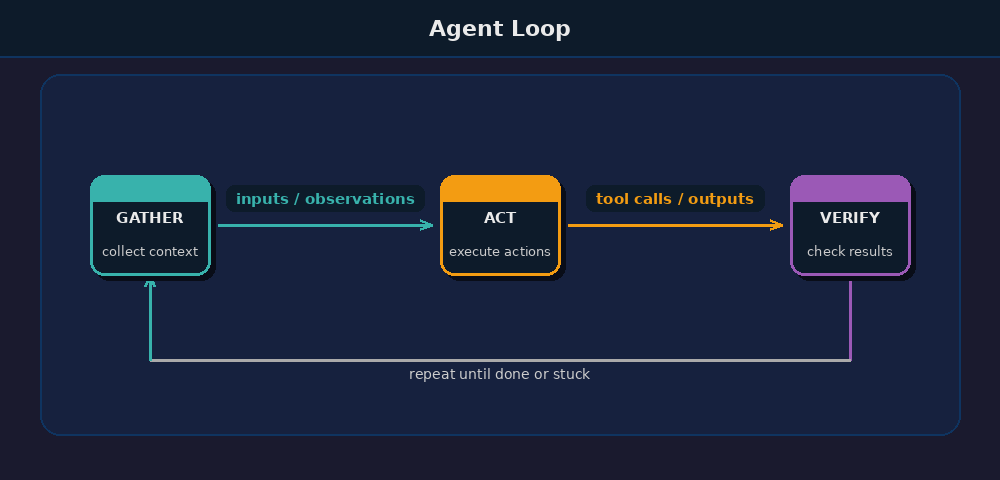

The Agent Feedback Loop

One pattern I keep seeing in the research is this core loop:

| Phase | Action | Key Question |
|---|---|---|
| Gather | Search files, fetch APIs, semantic search, subagents | Does the agent have sufficient context? |
| Act | Execute tools, run scripts, generate code, call MCPs | Can the agent take the required action? |
| Verify | Apply rules, inspect outputs, use LLM judgment | Did the action succeed? What failed? |

This loop, described in Anthropic's write-up on the Claude Agent SDK, repeats until the task is complete or a human intervenes. I'll be referencing it throughout the series.

What I'll Cover

| # | Topic | Phase | What I'm Curious About |
|---|---|---|---|
| 1 | Understanding LLMs | Foundations | How do these models actually work? |
| 2 | Prompt Engineering | Foundations | What makes prompts reliable? |
| 3 | API Integration | Foundations | How do I connect to production systems? |
| 4 | Tool Use | Capabilities | How do agents interact with the world? |
| 5 | Context Engineering | Capabilities | How do agents manage information? |
| 6 | RAG Systems | Capabilities | How do agents use external knowledge? |
| 7 | Agentic Patterns | Architecture | What patterns actually work? |
| 8 | Workflow Orchestration | Architecture | How do multi-step agents coordinate? |
| 9 | Memory Systems | Architecture | How do agents remember? |
| 10 | Evaluation | Architecture | How do I know if it's working? |
| 11 | Multi-Agent Systems | Architecture | How do agents collaborate? |
| 12 | Production Deployment | Architecture | How do I ship this safely? |

What I've Learned So Far

A few things that stood out from my initial research:

- Start simple. Anthropic's core message: add complexity only when simpler solutions fail. Most applications probably need optimized single-model calls, not agent swarms. I'll try to remember this.

- Tools matter more than I thought. Anthropic suggests putting as much effort into agent-computer interfaces as into human-computer interfaces. Poorly documented tools can trip up even a capable model.

- Patterns exist for reasons. Google documented eight multi-agent patterns. Each solves specific problems. I shouldn't just pick one randomly.

- The loop is everything. Gather-Act-Verify seems to separate working agents from demos. Verification isn't optional.

- Evaluation enables trust. Rule-based checks, visual inspection, and LLM judgment together form a layered strategy, each catching different kinds of failure. Skip evaluation, skip production.

What's Next

In the next article, I'll dive into Understanding LLMs—exploring transformer architecture, attention mechanisms, and the operational characteristics that determine how agents think and fail.

I'm genuinely curious about this stuff 🤓

*This is Article 1 of 12 in my AI Agents learning journey.*

Resources I'm Using

- Building Effective Agents — Anthropic

- Building Agents with the Claude Agent SDK — Anthropic

- Choose a Design Pattern for Your Agentic AI System — Google Cloud

- AI Agent Architecture: Build Systems That Work — Redis
